@beck

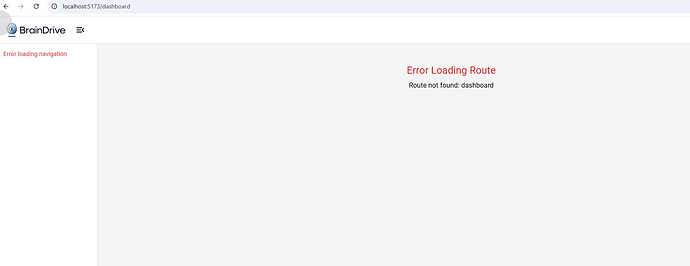

I couldn’t find any thing sticking out for the circular dependency but I did find a some areas I could clean up. I did a test run on a new db and no issues on my end.

"""add_hierarchical_navigation_support

Revision ID: 219da9748f46

Revises: cb95bbe8b720

Create Date: 2025-08-14 08:25:21.335545

"""

from typing import Sequence, Union

from alembic import op

import sqlalchemy as sa

from sqlalchemy import text

# revision identifiers, used by Alembic.

revision: str = "219da9748f46"

down_revision: Union[str, None] = "cb95bbe8b720"

branch_labels: Union[str, Sequence[str], None] = None

depends_on: Union[str, Sequence[str], None] = None

def upgrade() -> None:

"""Add hierarchical navigation support (SQLite-safe)."""

# ---- Phase 1: add columns ONLY (nullable first; no FK yet) ----

# Using recreate='always' to avoid SQLite edge cases

with op.batch_alter_table("navigation_routes", recreate="always") as batch_op:

batch_op.add_column(sa.Column("parent_id", sa.String(32), nullable=True))

batch_op.add_column(

sa.Column(

"display_order",

sa.Integer(),

nullable=True, # temporarily nullable; we'll tighten after backfill

server_default=sa.text("0"),

)

)

batch_op.add_column(

sa.Column(

"is_collapsible",

sa.Boolean(),

nullable=True, # temporarily nullable; we'll tighten after backfill

server_default=sa.text("1"),

)

)

batch_op.add_column(

sa.Column(

"is_expanded",

sa.Boolean(),

nullable=True, # temporarily nullable; we'll tighten after backfill

server_default=sa.text("1"),

)

)

# ---- Phase 1b: backfill NULLs and enforce NOT NULLs ----

conn = op.get_bind()

conn.execute(

text(

"""

UPDATE navigation_routes

SET display_order = COALESCE(display_order, 0),

is_collapsible = COALESCE(is_collapsible, 1),

is_expanded = COALESCE(is_expanded, 1)

"""

)

)

with op.batch_alter_table("navigation_routes", recreate="always") as batch_op:

batch_op.alter_column("display_order", nullable=False)

batch_op.alter_column("is_collapsible", nullable=False)

batch_op.alter_column("is_expanded", nullable=False)

# ---- Phase 2: create the self-referential FK in its own batch ----

with op.batch_alter_table("navigation_routes", recreate="always") as batch_op:

batch_op.create_foreign_key(

"fk_navigation_routes_parent_id",

"navigation_routes",

["parent_id"],

["id"],

ondelete="CASCADE",

)

# ---- Phase 3: indexes ----

op.create_index(

"idx_navigation_routes_parent_id",

"navigation_routes",

["parent_id"],

)

op.create_index(

"idx_navigation_routes_display_order",

"navigation_routes",

["display_order"],

)

op.create_index(

"idx_navigation_routes_parent_order",

"navigation_routes",

["parent_id", "display_order"],

)

# ---- Phase 4: seed / migrate data ----

your_braindrive_id = "yourbraindriveparent123456789012" # <= 32 chars

your_pages_id = "yourpagesparent1234567890123456" # <= 32 chars

# Create "Your BrainDrive" parent (idempotent)

conn.execute(

text(

"""

INSERT OR IGNORE INTO navigation_routes (

id, name, route, icon, description,

is_visible, creator_id, is_system_route,

display_order, is_collapsible, is_expanded,

created_at, updated_at

) VALUES (

:id, 'Your BrainDrive', 'your-braindrive', 'AccountTree',

'Core BrainDrive functionality and settings',

1, 'system', 1,

0, 1, 1,

CURRENT_TIMESTAMP, CURRENT_TIMESTAMP

)

"""

),

{"id": your_braindrive_id},

)

# Create "Your Pages" parent (idempotent)

conn.execute(

text(

"""

INSERT OR IGNORE INTO navigation_routes (

id, name, route, icon, description,

is_visible, creator_id, is_system_route,

display_order, is_collapsible, is_expanded,

created_at, updated_at

) VALUES (

:id, 'Your Pages', 'your-pages', 'CollectionsBookmark',

'Your custom pages and content',

1, 'system', 1,

1, 1, 1,

CURRENT_TIMESTAMP, CURRENT_TIMESTAMP

)

"""

),

{"id": your_pages_id},

)

# Migrate existing system routes under "Your BrainDrive"

route_migrations = [

("settings", 0, "Settings"),

("personas", 1, "Personas"),

("plugin-manager", 2, "Plugin Manager"),

("plugin-studio", 3, "Page Builder"), # rename

]

for route, order, display_name in route_migrations:

result = conn.execute(

text(

"""

UPDATE navigation_routes

SET parent_id = :parent_id,

display_order = :display_order,

name = :name

WHERE route = :route AND is_system_route = 1

"""

),

{

"parent_id": your_braindrive_id,

"display_order": order,

"name": display_name,

"route": route,

},

)

# optional: prints if you want runtime feedback in logs

# print(f"Migrated {route} -> {display_name}") if result.rowcount else print(f"Warning: {route} not found")

# Ensure "Prompt Library" exists under "Your BrainDrive"

conn.execute(

text(

"""

INSERT OR IGNORE INTO navigation_routes (

id, name, route, icon, description,

is_visible, creator_id, is_system_route,

parent_id, display_order, is_collapsible, is_expanded,

created_at, updated_at

) VALUES (

'promptlibrary123456789012345678',

'Prompt Library', 'prompt-library', 'LibraryBooks',

'Manage your AI prompts and templates',

1, 'system', 1,

:parent_id, 4, 1, 1,

CURRENT_TIMESTAMP, CURRENT_TIMESTAMP

)

"""

),

{"parent_id": your_braindrive_id},

)

# Bump display orders for any remaining root-level routes

conn.execute(

text(

"""

UPDATE navigation_routes

SET display_order = CASE

WHEN route = 'your-braindrive' THEN 0

WHEN route = 'your-pages' THEN 1

ELSE COALESCE(display_order, 0) + 2

END

WHERE parent_id IS NULL

"""

)

)

def downgrade() -> None:

"""Remove hierarchical navigation support (SQLite-safe)."""

conn = op.get_bind()

# Move children to root level; we cannot recover prior ordering, so use current values

conn.execute(

text(

"""

UPDATE navigation_routes

SET parent_id = NULL,

display_order = COALESCE(display_order, 0)

WHERE parent_id IS NOT NULL

"""

)

)

# Remove the parent routes we created

conn.execute(

text(

"""

DELETE FROM navigation_routes

WHERE route IN ('your-braindrive', 'your-pages')

AND is_system_route = 1

"""

)

)

# Drop indexes first (portable signature)

op.drop_index("idx_navigation_routes_parent_order", table_name="navigation_routes")

op.drop_index("idx_navigation_routes_display_order", table_name="navigation_routes")

op.drop_index("idx_navigation_routes_parent_id", table_name="navigation_routes")

# Drop FK and columns in a batch recreate

with op.batch_alter_table("navigation_routes", recreate="always") as batch_op:

batch_op.drop_constraint(

"fk_navigation_routes_parent_id", type_="foreignkey"

)

batch_op.drop_column("is_expanded")

batch_op.drop_column("is_collapsible")

batch_op.drop_column("display_order")

batch_op.drop_column("parent_id")

I am going to do a new install on bare system see if that exposes anything (This will be from the main repo and not my dev or with the above changes)