Hi Guys,

@DJJones and I spent a good portion of the dev call today discussing the creation of a BrainDrive Model Guide (working title). Below is a link to the full video recording, followed by an AI-powered summary of our conversation.

Comments, questions, ideas, etc. are welcome as always. Just hit the reply button.

Thanks!

Dave W.

The Problem

If you’ve spent any time in the local LLM space, you’ve probably seen the same question asked over and over:

“What’s the best model for [coding | therapy | content creation | research etc] that runs locally?”

And the answer is usually… “It depends.”

There’s no easy way to compare local models by use case, especially for non-technical owners like Katie Carter who want results—not benchmarks.

The Vision

We want to make it dead simple to evaluate and compare models based on real-world tasks—not just abstract academic benchmarks.

- What if you could install a plugin that runs a series of practical tests on a local model?

- What if BrainDrive could act as your testing lab, and generate side-by-side evaluations?

- What if we built a public directory of results, so you could search: “Best 8B model for content writing,” and instantly see real examples?

That’s the idea.

What We’re Exploring

Here’s what we’re thinking for v1:

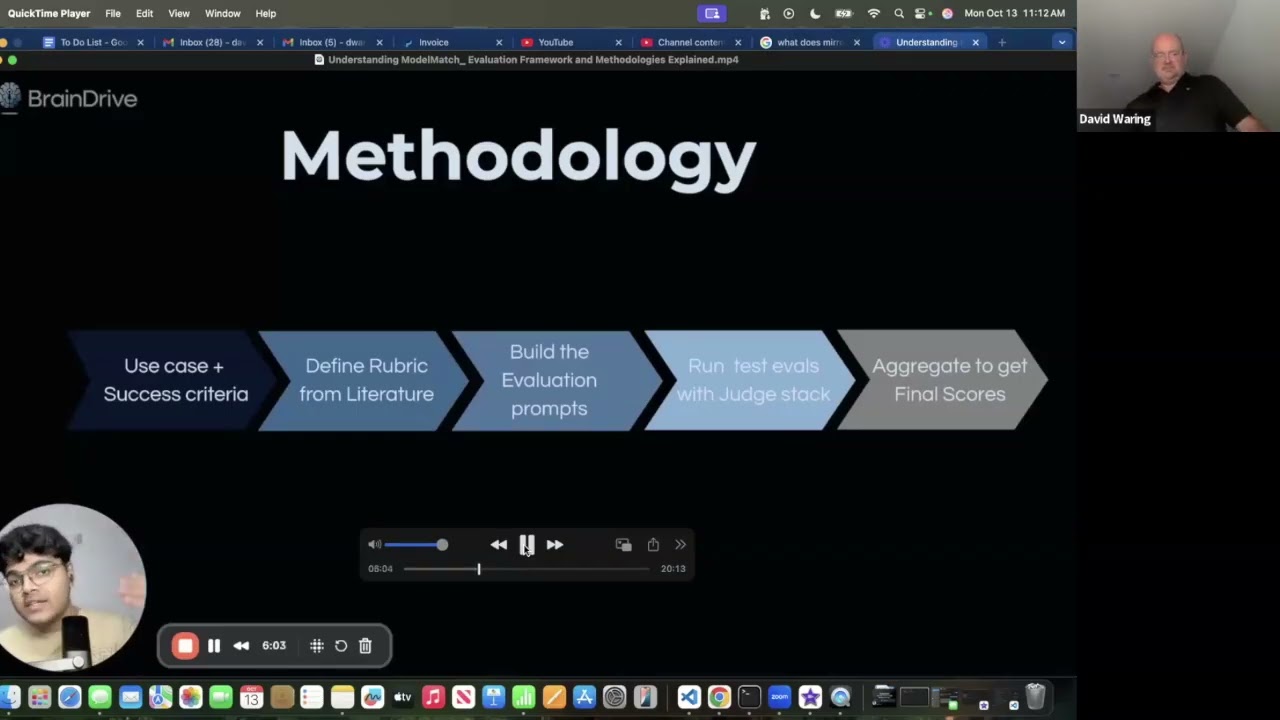

- Start with a set of the most common use cases (e.g. writing, therapy, summarization, research)

- Define a handful of real-world tasks for each (e.g. write a blog intro, summarize an article, hold a 5-turn conversation)

- Use BrainDrive plugins to run each model through these tasks

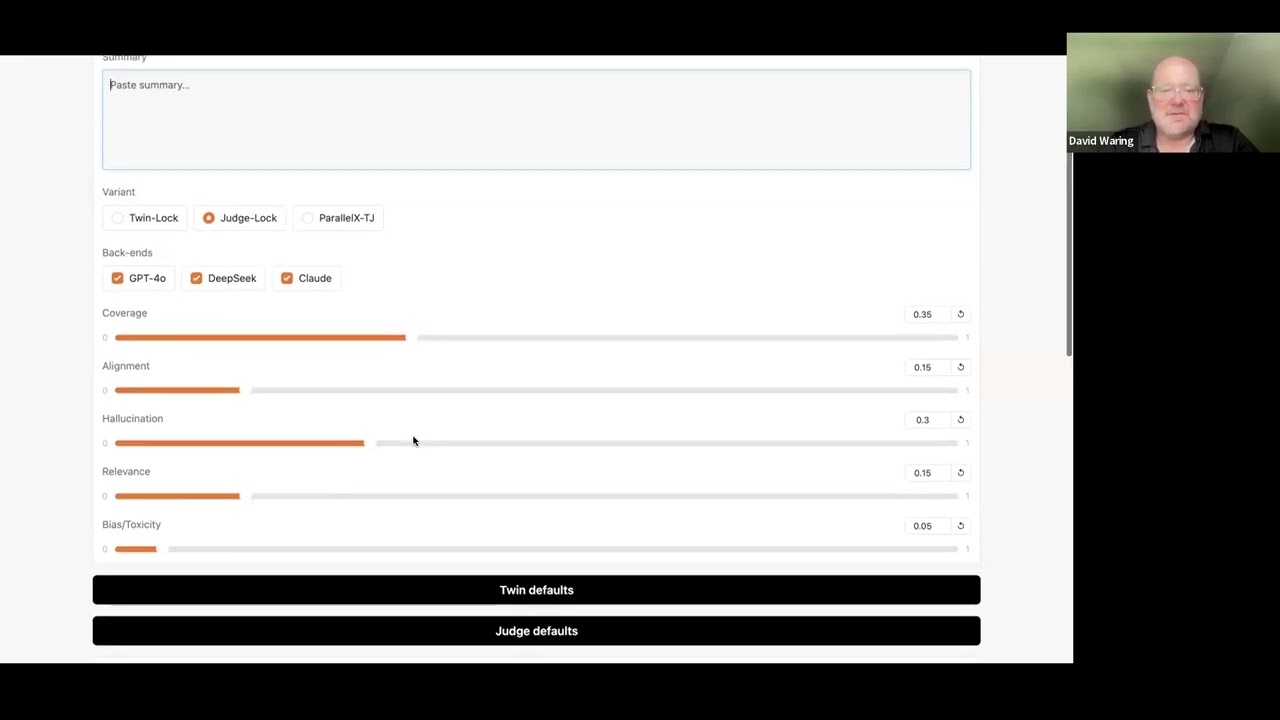

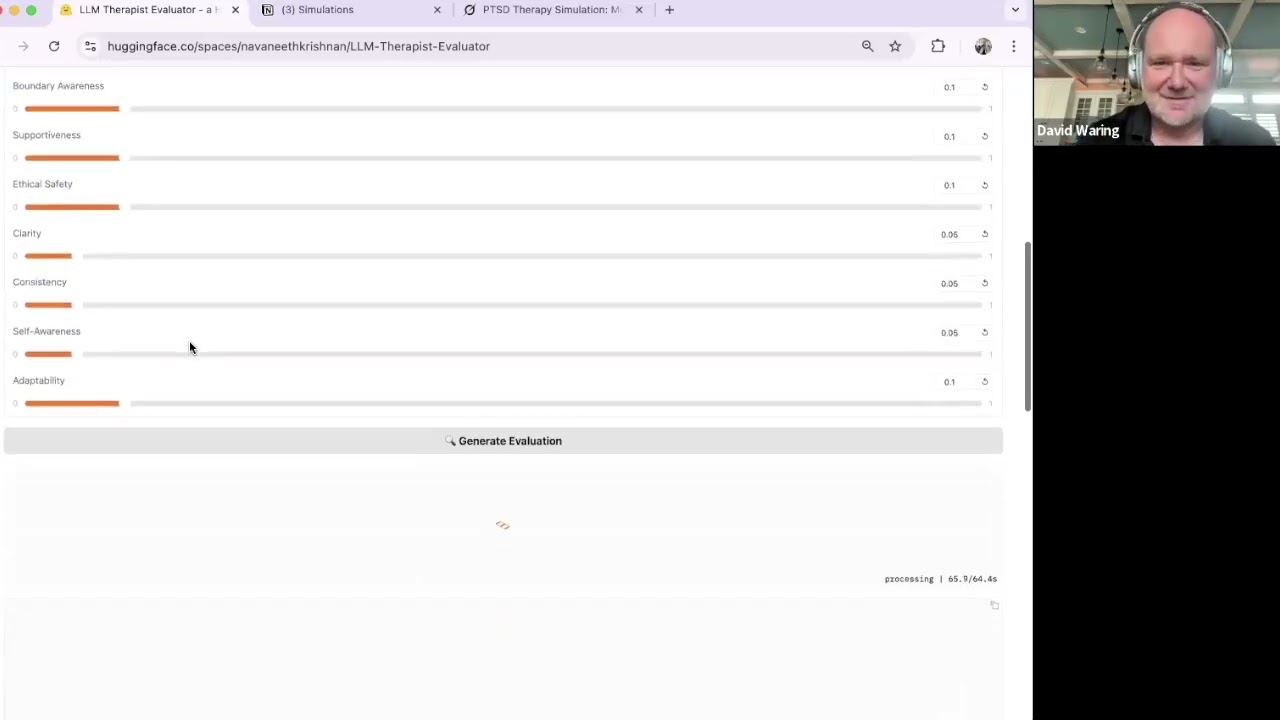

- Use a larger state-of-the-art model (like GPT-4) to evaluate the quality of the results

- Display the results in a public directory, possibly right inside BrainDrive
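To make the v1 flow above a bit more concrete, here is a rough Python sketch of how tasks, a local model, and a judge model could fit together. Everything in it is an assumption for illustration: `Task`, `evaluate_model`, `fake_local_model`, and `fake_judge` are hypothetical names, not an existing BrainDrive plugin API, and the stand-in functions only exist so the sketch runs without a model installed.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Callable, Dict, List


# Hypothetical structure: not an existing BrainDrive API.
@dataclass
class Task:
    use_case: str  # e.g. "writing", "summarization"
    prompt: str    # the real-world task handed to the model


def evaluate_model(
    generate: Callable[[str], str],
    judge: Callable[[str, str], float],
    tasks: List[Task],
) -> Dict[str, float]:
    """Run each task through `generate`, score the output with `judge`,
    and return the mean score per use case."""
    scores: Dict[str, List[float]] = {}
    for task in tasks:
        output = generate(task.prompt)
        scores.setdefault(task.use_case, []).append(judge(task.prompt, output))
    return {use_case: mean(values) for use_case, values in scores.items()}


# Stand-ins so the sketch runs on its own (assumptions, not real models).
def fake_local_model(prompt: str) -> str:
    return f"Response to: {prompt}"


def fake_judge(prompt: str, output: str) -> float:
    # A real judge would ask a larger model to grade the output.
    return 1.0 if prompt in output else 0.0


tasks = [
    Task("writing", "Write a blog intro about local AI."),
    Task("summarization", "Summarize this article in two sentences."),
]
results = evaluate_model(fake_local_model, fake_judge, tasks)
print(results)
```

Passing the model and the judge in as plain functions keeps the harness independent of how a model is actually served, so the same loop could work for any local model a plugin can call.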

We’d also tag each model with metadata:

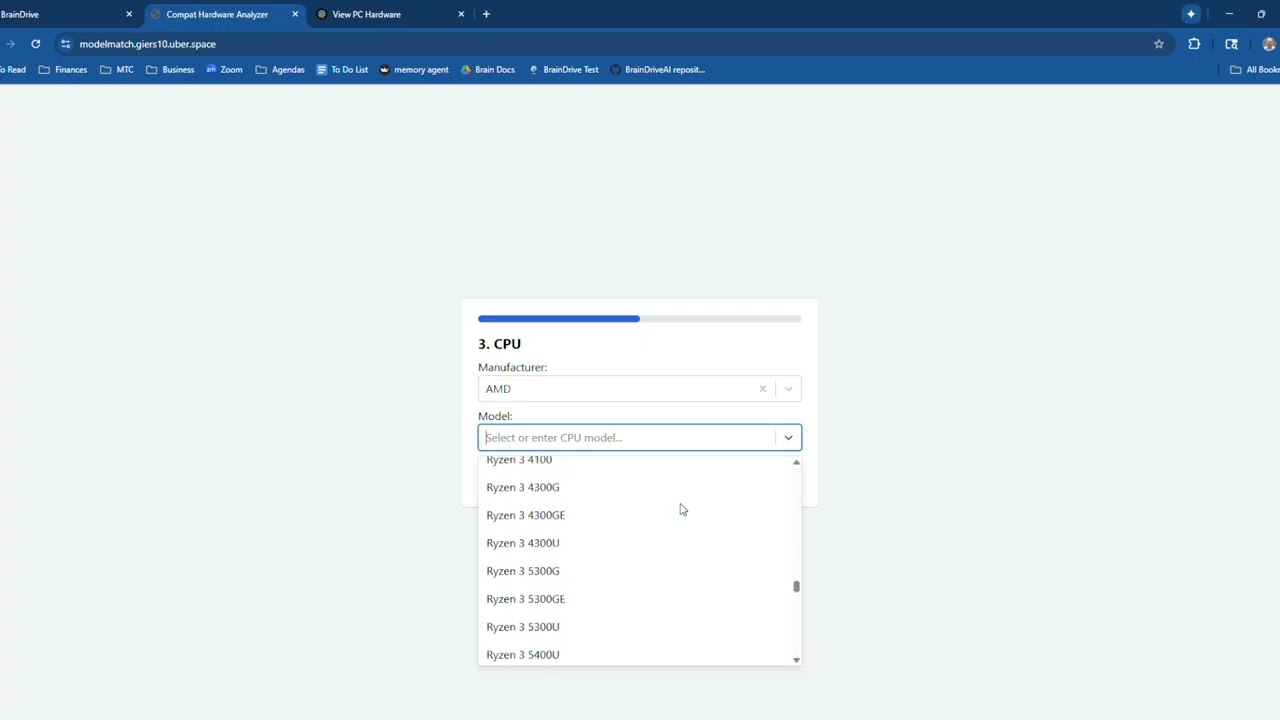

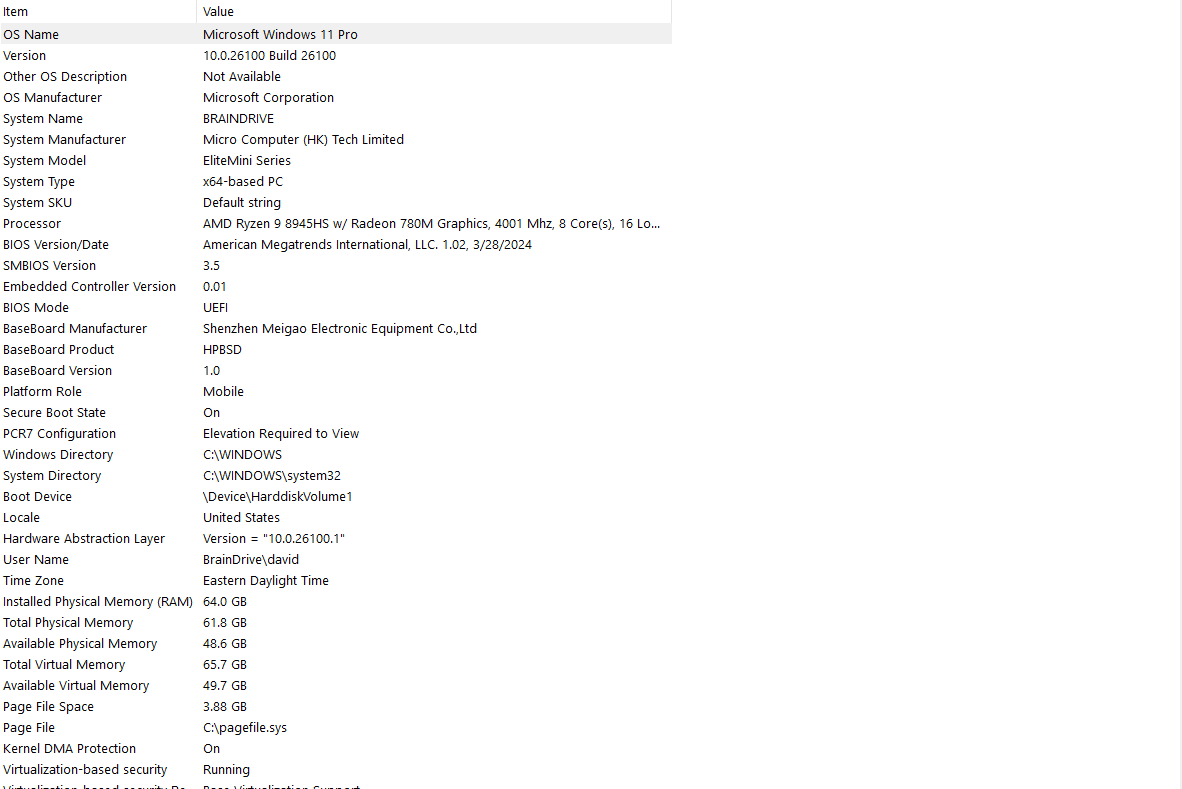

- Model size (1B, 8B, 13B, etc.)

- Hardware requirements (e.g. VRAM needed for 15–20 tokens/sec)

- License, origin, and finetuning info

- Community ratings and comments
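As a sketch of how that metadata could drive a search like "best model for writing that fits my GPU", here is one possible shape for a directory entry and a lookup. The field names, the `best_for` helper, and the entries themselves are all placeholders made up for illustration. They are not real models or real scores.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


# Hypothetical directory entry; field names are assumptions.
@dataclass
class ModelEntry:
    name: str
    params_billions: float
    min_vram_gb: float          # VRAM needed for a usable tokens/sec rate
    license: str
    scores: Dict[str, float] = field(default_factory=dict)  # use case -> score


def best_for(
    entries: List[ModelEntry], use_case: str, vram_gb: float
) -> Optional[ModelEntry]:
    """Return the highest-scoring entry for `use_case` that fits in
    `vram_gb`, or None if nothing qualifies."""
    candidates = [
        e for e in entries
        if e.min_vram_gb <= vram_gb and use_case in e.scores
    ]
    return max(candidates, key=lambda e: e.scores[use_case], default=None)


# Placeholder data only: not real models, licenses, or results.
directory = [
    ModelEntry("placeholder-8b", 8, 8, "example-license", {"writing": 0.7}),
    ModelEntry("placeholder-13b", 13, 16, "example-license", {"writing": 0.9}),
]

pick = best_for(directory, "writing", vram_gb=12)
print(pick.name if pick else "no match")
```

Filtering on hardware first and ranking by score second matches the order most people would ask the question in: what can I run, and of those, what is best for my task.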

Eventually, the goal is to make it easy to:

- See how well a model performs before downloading it

- Choose the best option for your hardware and task

- Contribute your own tests, models, and feedback

Why It Matters

This directly supports our mission to help you build, control, and benefit from your own AI system. Picking the right model is step one.

It also unlocks:

- Better tools for our Katie and Adam personas

- More visibility for independent model creators

- A real-world benchmark alternative the community can evolve

How You Can Help

We’re early in the planning phase, so we’d love your feedback:

- What use cases should we include first?

- What test prompts or tasks do you think we should use?

- What evaluation methods would be helpful?

- Would you be interested in contributing models, tests, or evaluations?

Let us know what you think below.

This is a community-powered initiative, and your input will shape where we go from here.

— Dave W.

Your AI. Your Rules.