Hey everyone, Navaneeth here

Wanted to share an honest update on my first round of work with BrainDrive Core and the Why detector plugin. This covers what broke, how I fixed it, what I learned from talking with @davewaring and @DJJones, and what we finally decided for the first version of Why Finder.

1. Installing BrainDrive Core

Ollama confusion

First wall I hit was Ollama.

I reinstalled BrainDrive Core after a few months and things were acting weird. Models were not behaving as expected, and it took me a while to realise the obvious problem:

I had not installed Ollama separately.

The repo does not really give you an in-your-face message that says:

“You must install Ollama to run local models.”

How I fixed it

I manually installed Ollama on my machine, pulled the models, and after that the BrainDrive Core setup started making sense.
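If you want a quick sanity check before launching BrainDrive, here is a minimal sketch that asks a local Ollama server which models it has pulled. It assumes Ollama is listening on its default address (`http://localhost:11434`) and uses its `/api/tags` endpoint. The helper names are my own and are not part of BrainDrive.

```python
import json
import urllib.error
import urllib.request

# Assumption: Ollama is running locally on its default port.
OLLAMA_TAGS_URL = "http://localhost:11434/api/tags"


def parse_model_names(payload: dict) -> list:
    """Extract model names from the JSON returned by Ollama's /api/tags."""
    return [m["name"] for m in payload.get("models", []) if "name" in m]


def installed_models(url: str = OLLAMA_TAGS_URL, timeout: float = 2.0):
    """Return the list of pulled models, or None if Ollama is not reachable."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return parse_model_names(json.load(resp))
    except (urllib.error.URLError, OSError, ValueError):
        # Connection refused, timeout, or a non-JSON answer all land here.
        return None


def describe(models) -> str:
    """Turn the result of installed_models() into a readable message."""
    if models is None:
        return "Ollama is not reachable. Install and start it first."
    if not models:
        return "Ollama is running but no models are pulled yet."
    return "Available models: " + ", ".join(models)
```

Running `print(describe(installed_models()))` before starting the backend would have told me straight away that Ollama was missing.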

Takeaway and suggestion

There is already a plan from David to add a clear message in the AI chat page that tells new users to install Ollama. I strongly think the docs should also say this bluntly right at the top of the install section.

Something like:

Step zero: install Ollama, or nothing else will make sense.

Conda vs system Python and Sentry error

Second issue was more annoying.

When I tried to run the backend with Uvicorn, I got an error about Sentry SDK not found, even though I had it in my Conda environment.

Root cause

Uvicorn was not using my Conda env at all. It was picking up the system Python and its site packages, which obviously did not have what I installed inside the BrainDriveDev environment.

What I did to fix it

- Reactivated my Conda env properly
- Reinstalled uvicorn inside that env so the correct Python interpreter was used
- Ran the backend again from inside BrainDriveDev and the Sentry error disappeared

So this one was not really a BrainDrive bug, more of an environment confusion. But for anyone who jumps between pyenv, Conda, and system Python, this is a realistic footgun.

Possible doc improvement

A short note in the docs that says:

Run uvicorn only from inside the BrainDrive Conda environment.

If you see missing module errors like Sentry SDK, check that the active interpreter is the Conda one.

That would save some time for the next person.
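For anyone who wants to check this quickly, here is a small sketch that reports which interpreter is actually running and whether it can see the packages in question. It relies on the `CONDA_PREFIX` and `CONDA_DEFAULT_ENV` variables that `conda activate` sets. The function names are my own, and the module names checked are just the two from my case.

```python
import importlib.util
import os
import sys


def uses_conda_prefix(sys_prefix: str, conda_prefix) -> bool:
    """True if the running interpreter's prefix is the active Conda env."""
    if not conda_prefix:
        return False
    return os.path.realpath(sys_prefix) == os.path.realpath(conda_prefix)


def module_available(name: str) -> bool:
    """True if this interpreter can find a top-level module called `name`."""
    return importlib.util.find_spec(name) is not None


def report() -> str:
    """Summarise the interpreter and whether key modules are visible to it."""
    env_name = os.environ.get("CONDA_DEFAULT_ENV", "(none active)")
    in_env = uses_conda_prefix(sys.prefix, os.environ.get("CONDA_PREFIX"))
    lines = [
        f"interpreter : {sys.executable}",
        f"conda env   : {env_name}",
        f"in conda env: {in_env}",
        f"sentry_sdk  : {module_available('sentry_sdk')}",
        f"uvicorn     : {module_available('uvicorn')}",
    ]
    return "\n".join(lines)


if __name__ == "__main__":
    print(report())
```

If `in conda env` shows `False`, the fix is on the environment side. Starting the server with `python -m uvicorn ...` from the activated env is one way to make sure uvicorn runs under the same interpreter you just checked.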

2. Model weirdness in chat

While playing with one of the local models, I got this type of output:

“user that is great I think I will stick to spaghetti”

It looked like the model was replying as “user” to itself, which at first made me think BrainDrive was mislabelling roles.

On the call, Dave cleared this up. This is not a BrainDrive bug. This is quantised local models leaking training patterns. When these models are heavily quantised, sometimes they start spitting out the internal conversation format they saw during training.

Conclusion

Nothing to fix in BrainDrive for this.

The real fix is choosing better models or better quant settings and being explicit about recommended starter models for new users so their first experience is not spaghetti role names.

3. Database understanding

I also wanted to know where the data actually lives.

We confirmed on the call:

- BrainDrive is using SQLite right now
- The main database file lives in the backend folder
- The location is controlled by the env file, so you can swap db files if you want different datasets or test setups
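To make the env file point concrete, here is a rough sketch of resolving a SQLite file from an env file and peeking at its tables. The key name `DATABASE_URL` and the `sqlite:///file.db` URL form are assumptions on my part, so check your own `.env` for the real key and value. It opens the database read-only so it cannot disturb a running backend.

```python
import sqlite3
from pathlib import Path

# Assumption: the env file has a line like DATABASE_URL=sqlite:///some_file.db
# The key name is hypothetical; use whatever your own .env actually contains.
DB_KEY = "DATABASE_URL"


def read_env(path: Path) -> dict:
    """Parse simple KEY=VALUE lines, skipping blanks and comments."""
    values = {}
    for line in path.read_text().splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def sqlite_path(url: str, base_dir: Path) -> Path:
    """Turn a sqlite:/// URL into a file path, relative to base_dir."""
    prefix = "sqlite:///"
    if not url.startswith(prefix):
        raise ValueError(f"not a SQLite URL: {url}")
    return (base_dir / url[len(prefix):]).resolve()


def list_tables(db_file: Path) -> list:
    """Return the table names in the SQLite file, opened read-only."""
    conn = sqlite3.connect(db_file.resolve().as_uri() + "?mode=ro", uri=True)
    try:
        rows = conn.execute(
            "SELECT name FROM sqlite_master WHERE type='table' ORDER BY name"
        )
        return [row[0] for row in rows]
    finally:
        conn.close()
```

Swapping datasets then comes down to changing that one value in the env file and restarting the backend.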

This is exactly what I needed. Simple, local, and not over-engineered for a desktop-style app.

Alembic is used for migrations, but from a plugin developer perspective I do not need to think too hard about it yet.

4. First plugin and dev workflow

Before touching Why Finder, I built a tiny plugin just to understand the flow.

I made a rock paper scissors plugin that just plays with you through the UI. Simple, but it forced me to understand:

- Where to place plugin code
- What to edit in the manifest
- How to hook into the BrainDrive plugin system

Then Dave showed his actual development workflow, which is very useful in practice.

Key points that helped:

- Create a plugin build folder inside BrainDrive Core and clone plugin repos there
- When you are in dev mode, copy the dist build directly into the backend plugin shared folder so you do not have to reinstall the plugin every time
- Use browser dev tools and disable cache when testing plugin changes, so React does not serve stale assets
- Use the FastAPI docs at http://localhost:8005/api/v1/docs to understand all the backend endpoints that plugins can call
- For planning, write a short design brief and let your code assistant generate a markdown plan that both you and the AI can refer to
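The "copy the dist build" step from the list above can be scripted so it becomes a single command after each build. This is only a sketch: the function name is mine, and the two folder paths are placeholders you would replace with the real locations in your checkout.

```python
import shutil
from pathlib import Path


def sync_dist(dist_dir: Path, target_dir: Path) -> int:
    """Copy a freshly built dist folder over the installed plugin's copy.

    Returns the number of files present in the target afterwards.
    """
    if not dist_dir.is_dir():
        raise FileNotFoundError(f"build output not found: {dist_dir}")
    target_dir.mkdir(parents=True, exist_ok=True)
    # dirs_exist_ok=True overwrites files in place (needs Python 3.8+).
    shutil.copytree(dist_dir, target_dir, dirs_exist_ok=True)
    return sum(1 for p in target_dir.rglob("*") if p.is_file())


# Placeholder paths, not the real BrainDrive layout:
# sync_dist(Path("<plugin build folder>/<plugin>/dist"),
#           Path("<backend plugin shared folder>/<plugin>/dist"))
```

Note that this only overwrites and adds files. Anything that was removed from the build stays in the target until you clear it by hand.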

This whole setup makes plugin development much less painful.
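On the FastAPI docs point, the docs page is rendered from an OpenAPI JSON schema, so you can also list the endpoints in a terminal. The sketch below summarises such a schema. Where the schema is served is an assumption: FastAPI defaults to `/openapi.json`, but an app can move it, so the URL in the comment is a guess you would need to confirm against the docs page.

```python
import json
import urllib.request

HTTP_METHODS = {"get", "post", "put", "patch", "delete", "options", "head"}


def list_endpoints(schema: dict) -> list:
    """Return sorted (METHOD, path, summary) tuples from an OpenAPI schema."""
    found = []
    for path, operations in schema.get("paths", {}).items():
        for method, details in operations.items():
            # Path items can also hold non-operation keys such as "parameters".
            if method.lower() in HTTP_METHODS:
                found.append((method.upper(), path, details.get("summary", "")))
    return sorted(found, key=lambda entry: (entry[1], entry[0]))


def fetch_schema(url: str, timeout: float = 5.0) -> dict:
    """Download and parse an OpenAPI schema from a running backend."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return json.load(resp)


# Assumed URL, verify it first:
# for method, path, summary in list_endpoints(
#         fetch_schema("http://localhost:8005/api/v1/openapi.json")):
#     print(f"{method:7} {path}  {summary}")
```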

Dave also shared the craft plugin repo which is basically a reference implementation. It shows:

- How to use service bridges like API, settings, theme, and page context
- How to store personas or user data
- How to auto create a page so the plugin works right after install without manual page builder work

I will follow this pattern for Why Finder.

5. Scope reset for Why detector

Now the important part.

I originally came in with a huge plan for Why Finder:

- Discovery mode
- Energy mapping
- A second model verifying answer depth
- Loops that re-ask questions if the person is lazy with answers
- Summaries for long-term profiles
- Decision mode for career choices and life choices
- Database structures for storing all of this

In short, I jumped straight to version three plus therapy without version one existing.

On the call, David cut this down to something much more direct and sensible.

What they actually want right now

For this phase the Why Finder plugin should:

- Run a structured Q and A inside BrainDrive to learn about the person
  Example: ask about two or three life stories where they felt most alive or proud
- Use that conversation to generate a single clear Why statement in one sentence
  Example: “My why is helping people unlock complex ideas and turn them into real systems that work in the real world.”
- Let the user react to that statement
  - Do you like this?
  - What would you change?
  - Possibly iterate once or twice to refine it

That is it. No extra storage logic beyond what BrainDrive already does with chat history. No new database tables. No heavy parallel model setup. Just a clean experience that helps someone discover a why statement for free.

Local models first

We agreed to try this with local models through Ollama first. If I hit a hard wall with quality or behaviour, then we can discuss calling API models instead. But the first attempt will be fully local.

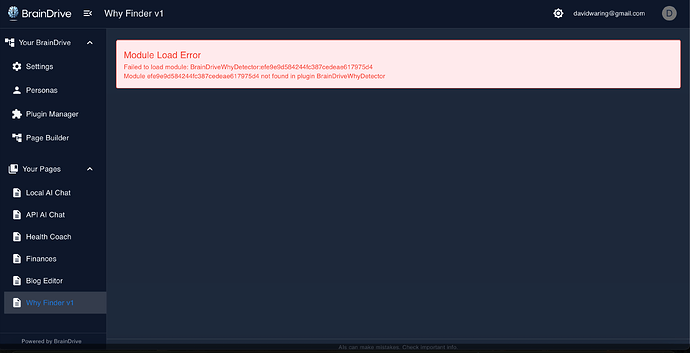

6. Final plan for Why Finder v1

Here is the concrete plan for this phase.

- Plugin UX
  - When the user opens Why Finder they see a short intro explaining what this tool does and a simple safety note
  - They click start and the plugin guides them into a Q and A about a few key life stories
- Conversation flow
  - The plugin uses one main model for the whole interaction
  - The model asks for two or three specific stories where the person felt energised or fulfilled
  - It asks follow-up questions to dig into why those moments mattered
  - The structure is inspired by the existing Why detector site that David already built
- Why statement generation
  - Once enough detail is collected, the model stops and generates a candidate Why statement in a single sentence
  - It reflects back what it heard from the stories
  - The user can say what feels right and what feels off
- Light iteration
  - The plugin can offer one or two iterations based on user feedback
  - Goal is a final Why statement that the user feels seen by
- Output
  - Final output is that single Why statement visible inside the plugin page
  - For this phase we rely on BrainDrive's existing chat history and do not implement custom storage or profile systems
- Later phases
  - Not in scope now, but my original ideas for energy mapping, decision mode, and deeper integration with profiles can live in future versions once we prove the simple version works and is valuable.
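To keep myself honest about scope, here is how I picture the v1 flow as a tiny state machine. This is only a thinking aid, not plugin code: the real plugin lives in the frontend, and every name and limit here (the phases, two to three stories, at most two refinements) is my own reading of the plan above.

```python
from dataclasses import dataclass, field
from enum import Enum


class Phase(Enum):
    INTRO = "intro"          # short intro and safety note
    STORIES = "stories"      # guided Q and A about life stories
    STATEMENT = "statement"  # model drafts a one-sentence Why statement
    ITERATE = "iterate"      # user says what feels right or off
    DONE = "done"


@dataclass
class WhySession:
    """Tracks where the user is in the guided flow (illustrative names)."""
    min_stories: int = 2
    max_stories: int = 3
    max_iterations: int = 2
    phase: Phase = Phase.INTRO
    stories: list = field(default_factory=list)
    iterations: int = 0

    def start(self) -> None:
        if self.phase is Phase.INTRO:
            self.phase = Phase.STORIES

    def add_story(self, text: str) -> None:
        """Record one story; move on automatically at the upper limit."""
        if self.phase is not Phase.STORIES:
            raise ValueError("not collecting stories right now")
        self.stories.append(text.strip())
        if len(self.stories) >= self.max_stories:
            self.phase = Phase.STATEMENT

    def finish_stories(self) -> None:
        """Let the user move on early once the minimum is reached."""
        if self.phase is Phase.STORIES and len(self.stories) >= self.min_stories:
            self.phase = Phase.STATEMENT

    def statement_shown(self) -> None:
        """Call after the model has produced a candidate Why statement."""
        if self.phase is Phase.STATEMENT:
            self.phase = Phase.ITERATE

    def feedback(self, accepted: bool) -> None:
        """User reacts; allow only a bounded number of refinements."""
        if self.phase is not Phase.ITERATE:
            raise ValueError("no statement to react to yet")
        if accepted or self.iterations >= self.max_iterations:
            self.phase = Phase.DONE
        else:
            self.iterations += 1
            self.phase = Phase.STATEMENT
```

There is no storage, no second model, and no extra mode anywhere in it. If a feature does not fit into one of these five phases, it belongs in a later version.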

7. What is next

Next step for me

Build the actual Why Finder plugin so that a user can open it, go through the guided Q and A, and end up with a clear why statement that feels accurate.

Once that is working end to end, we can all iterate on polish and decide how far to push it in later versions.

If anyone else in the community hits similar install issues or wants to build plugins, feel free to reply here and I can share more specifics from my setup.