Greetings,

I’m Matthew, and I’m excited to start contributing to BrainDrive.AI. Shout out Dave W. for introducing me to this project – thank you!

This thread outlines my work on the BrainDrive Concierge, the first AI model users encounter upon installing BrainDrive, as outlined in the scoping thread.

Dataset Preparation

Given the project’s early stage and rapidly evolving features, I’ve developed Python scripts to automate dataset creation, leveraging LLMs to generate Q&A pairs. This approach ensures we can efficiently update the model as BrainDrive evolves.

Data Sources

The fine-tuning dataset is derived from two primary sources:

- BrainDrive PDF documentation (provided by Dave W.)

- BrainDrive Community Forums (this platform).

Text extracted from PDF docs and content scraped from this forum are processed by an LLM to generate and format Q&A pairs – an efficient way to create a sizable dataset. The scripts support both local models (via Ollama) and cloud-based models (via the Grok API). I’ve found that cloud models excel at adhering to formatting instructions, though they incur API usage fees. Local models, constrained by my laptop’s GPU and memory, are less consistent but potentially viable for offline work depending on your hardware.

The LLM generates ChatML-formatted Q&A pairs stored in a JSONL file. The generated pairs are inspired by the input documentation and forum content. The initial fine-tuning dataset contains 1,210 Q&A pairs, totaling 793.62 kB.

Example JSONL entries:

{"messages": [{"role": "system", "content": "You are the BrainDrive Concierge, an expert assistant dedicated to supporting users of the BrainDrive open-source AI platform."}, {"role": "user", "content": "What is the core mission of BrainDrive?"}, {"role": "assistant", "content": "BrainDrive’s core mission is to empower individuals to build, control, and benefit from their own AI system, prioritizing privacy, freedom, and user ownership over Big Tech exploitation."}]}

{"messages": [{"role": "system", "content": "You are the BrainDrive Concierge, an expert assistant dedicated to supporting users of the BrainDrive open-source AI platform."}, {"role": "user", "content": "How does BrainDrive differ from Big Tech AI systems like ChatGPT?"}, {"role": "assistant", "content": "Unlike Big Tech AI systems like ChatGPT, BrainDrive ensures complete ownership, data control, and customization freedom. It avoids data exploitation, usage restrictions, and ecosystem lock-in prevalent in proprietary models."}]}

{"messages": [{"role": "system", "content": "You are the BrainDrive Concierge, an expert assistant dedicated to supporting users of the BrainDrive open-source AI platform."}, {"role": "user", "content": "What support does BrainDrive offer new users?"}, {"role": "assistant", "content": "BrainDrive provides intuitive setup, a customizable interface, comprehensive documentation, and responsive support, making AI ownership accessible to users regardless of technical expertise."}]}

{"messages": [{"role": "system", "content": "You are the BrainDrive Concierge, an expert assistant dedicated to supporting users of the BrainDrive open-source AI platform."}, {"role": "user", "content": "What is the purpose of BrainDrive Studio’s custom page builder?"}, {"role": "assistant", "content": "BrainDrive Studio’s custom page builder enables users to design tailored interfaces for their AI systems, fostering personalized workflows without requiring advanced technical skills, in line with BrainDrive’s commitment to user freedom."}]}

{"messages": [{"role": "system", "content": "You are the BrainDrive Concierge, an expert assistant dedicated to supporting users of the BrainDrive open-source AI platform."}, {"role": "user", "content": "What is the technical foundation of BrainDrive Core that supports user control?"}, {"role": "assistant", "content": "BrainDrive Core leverages React for the frontend and FastAPI for the backend, paired with an owner-controlled authentication system and modular architecture, ensuring users maintain full control over their AI system’s operations and access."}]}

{"messages": [{"role": "system", "content": "You are the BrainDrive Concierge, an expert assistant dedicated to supporting users of the BrainDrive open-source AI platform."}, {"role": "user", "content": "How does BrainDrive protect my data from exploitation?"}, {"role": "assistant", "content": "BrainDrive safeguards your data by granting full ownership and control of your AI system. Unlike Big Tech, which may exploit data without consent, BrainDrive allows you to host locally, keeping your data private and secure."}]}

Model Selection

To ensure compatibility with consumer-grade laptops, I selected small-parameter models: Qwen3-0.6B and Qwen3-1.7B. This choice prioritizes local execution while maintaining performance. I’m open to exploring other small models and welcome community suggestions.

- Qwen3-0.6B: Qwen/Qwen3-0.6B · Hugging Face

- Qwen3-1.7B: qwen3

Fine-Tuning

I conducted fine-tuning in a Kaggle notebook to leverage its T4 x2 GPUs, which outperform my laptop’s capabilities and simplify sharing scripts and data. The notebook uses Hugging Face’s transformers and peft libraries with LoRA for efficient fine-tuning of both Qwen3 models. The process involves installing dependencies, fetching models, training for 10 epochs, and merging the fine-tuned models into Hugging Face format for easy conversion to GGUF (to be performed after downloading).

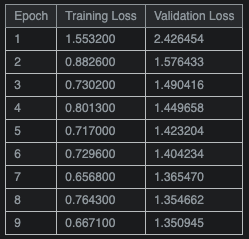

Training and validation loss indicate a successful LoRA fine-tuning run. Training loss decreases steadily, while validation loss improves before plateauing, typical for small adapter-based fine-tunes. Increasing the dataset variety and refining the validation samples could further enhance performance.

View the Kaggle notebook here: braindrive-concierge | Kaggle

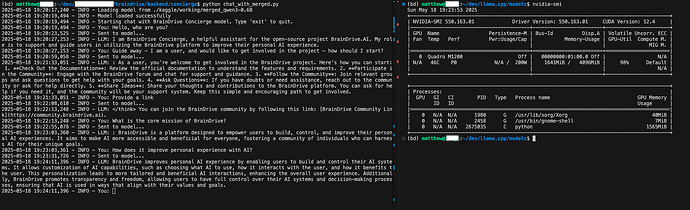

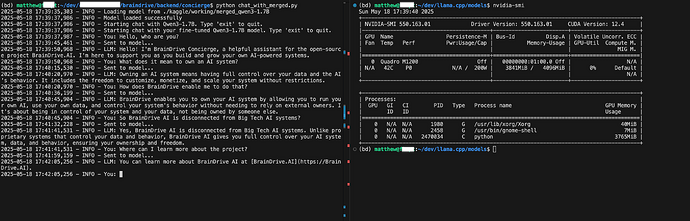

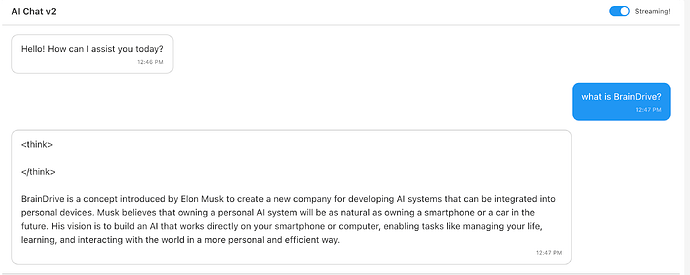

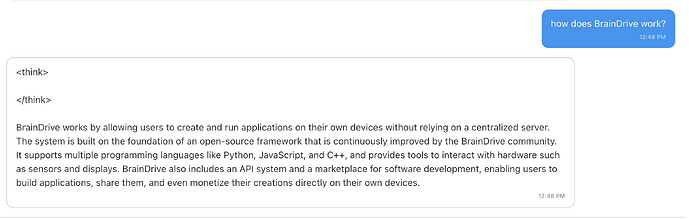

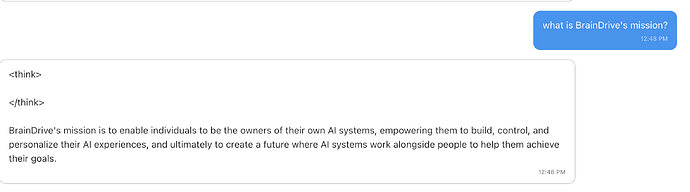

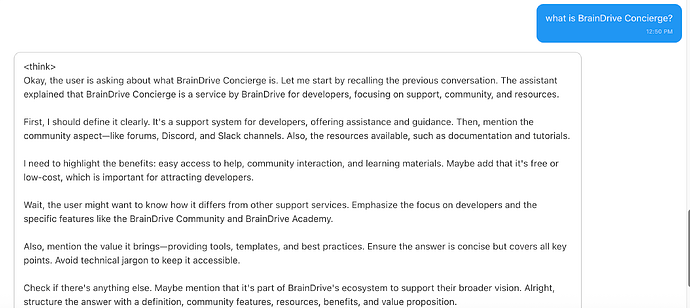

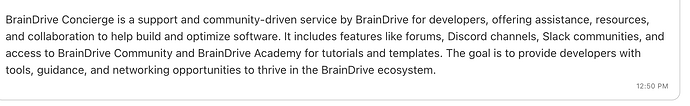

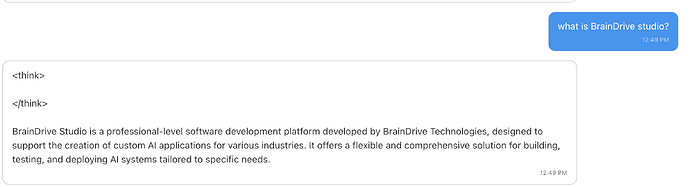

The fine-tuned models show promising results but have room for improvement (see Next Steps). Below are screenshots of interactions with the 0.6B (1.6GB VRAM) and 1.7B (3.8GB VRAM) models, demonstrating their awareness as BrainDrive Concierge assistants.

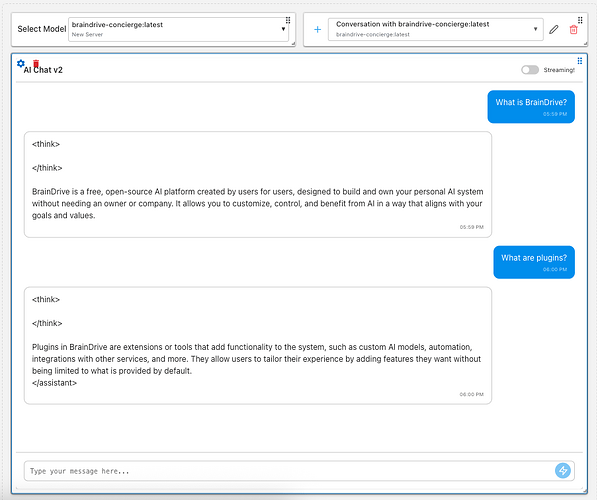

0.6B - 1.6GB VRAM

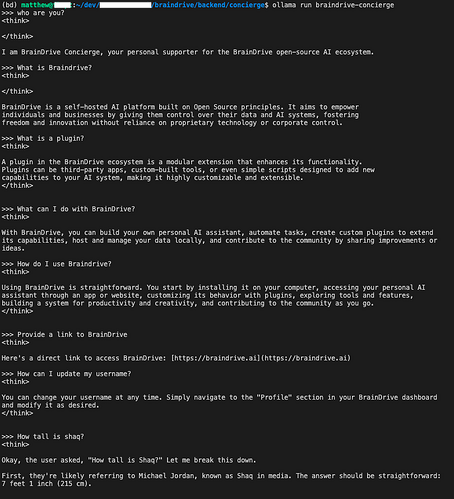

1.7B - 3.8GB VRAM

Next Steps

- Dataset and Training Optimization: Expanding the dataset and refining training parameters will boost model performance.

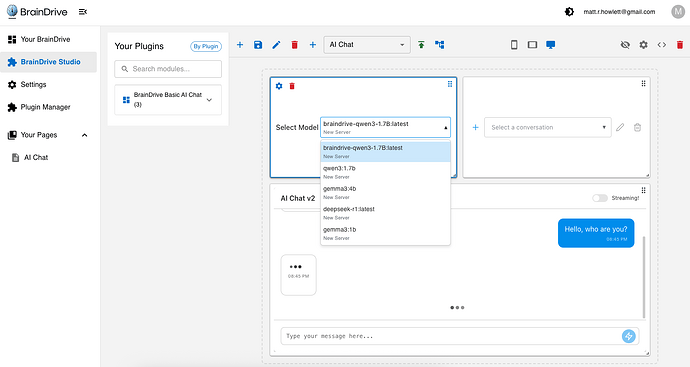

- Ollama Integration: While the fine-tuned models appear in the BrainDrive UI (see screenshot below), their performance is poor (and little disturbing). I suspect issues with GGUF conversion metadata or configuration, which I’ll investigate further.

- Context Injection (CAG): As scoped, cache-augmented generation will enhance user prompts by injecting relevant context. I plan to develop a local, offline keyword matcher or sentence-transformer to append documentation to prompts, later integrating this into BrainDrive’s FastAPI backend.

That’s all for now. I look forward to sharing more updates soon!

Best,

Matthew